AI Isn't Breaking Your Business. It's Exposing It.

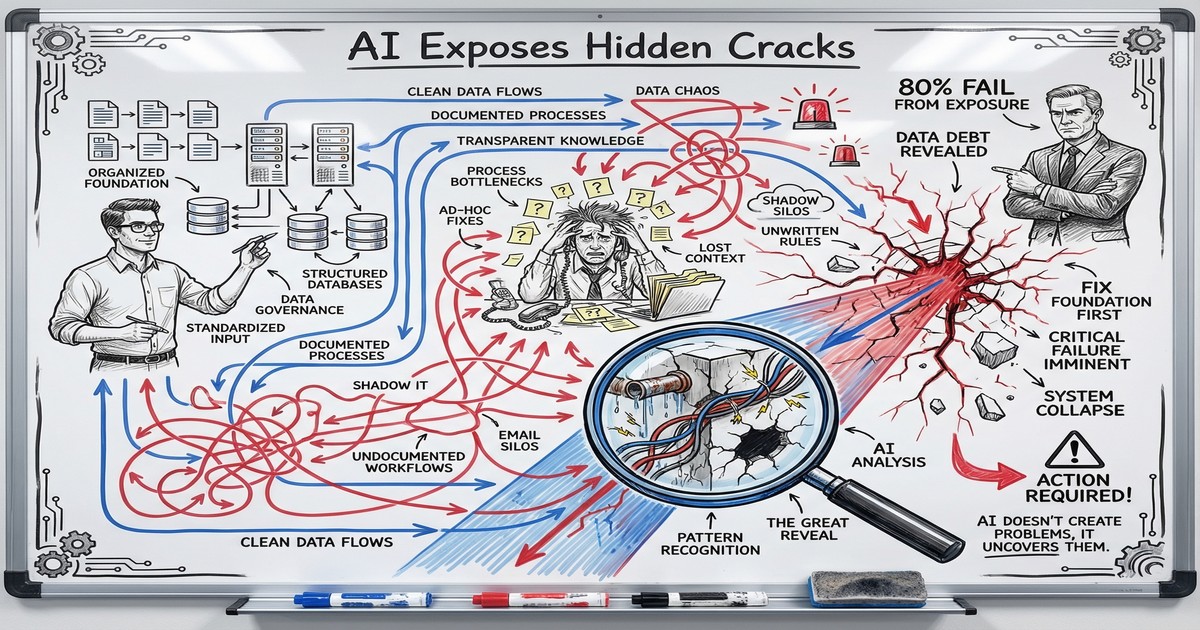

80% of AI projects fail, but the technology works fine. The real problem is messy data, undocumented processes, and organizational dysfunction that AI makes impossible to ignore.

The Factory That Changed Nothing

When electricity first arrived in factories, owners did something predictable. They ripped out the steam engine and dropped an electric motor in its place. Same floor plan. Same belt-driven machines. Same workflow.

Productivity barely moved.

It took an entire generation for manufacturers to grasp the point. Electricity meant you could redesign the whole factory. Smaller motors at each workstation. Flexible layouts. Natural light. Only then did output double and triple.

Most companies deploying AI today are making the exact same mistake. They bolt a language model onto a broken process and expect transformation. Then, when the results disappoint, they blame the technology.

The technology works fine. Your organization might not. And that is precisely why AI projects fail at twice the rate of regular IT projects, according to RAND Corporation research. Over 80% of AI initiatives stall or fail entirely. The pattern is consistent across industries, company sizes, and use cases.

AI exposes what was already broken.

Why AI Implementation Challenges Start with Your Processes

AI is the most honest audit your business will ever get. It cannot improvise around a broken handoff. It cannot “just know” what Karen in accounting means when she labels an expense “misc.” It cannot read the sticky note on someone’s monitor that holds the real workflow together.

Every workaround, tribal knowledge shortcut, and undocumented process that your team navigates on instinct becomes a wall for AI. And the wall is loud. It shows up as bad outputs, wrong predictions, and wasted spend.

Bill Gates put it plainly: “Automation applied to an efficient operation magnifies efficiency. Applied to an inefficient operation, it magnifies the inefficiency.”

Consider a real example. A major retail chain deployed AI to optimize employee scheduling. Smart move on paper. The system analyzed historical data, forecasted demand, and generated optimized schedules. Managers overrode 84% of the AI-generated schedules. The reason had nothing to do with the algorithm. Employee availability data was wrong. Role classifications were outdated. Store-specific rules lived in managers’ heads, not in any system. The AI performed exactly as designed. The organization fed it garbage.

This is why you need to understand what happens when your rules break before you introduce any AI system. Automation amplifies whatever it touches, including the mess.

The Data Quality Crisis Behind AI Project Failures

Here is the stat that should concern every business leader: 70% of AI project failures trace back to data quality issues. Not model selection. Not compute power. Not prompt engineering. Data.

And the data problem is massive. IBM estimates that US businesses lose €2.8 trillion annually from poor data quality. Gartner found that 63% of organizations lack the right data management practices for AI. Your CRM has duplicates. Your project management tool has inconsistent naming. Your financial data lives in three spreadsheets that contradict each other.

None of this mattered much before AI. Humans are remarkably good at compensating for messy data. Your sales manager knows that “Acme Corp” and “ACME Corporation” and “acme” are the same client. Your project lead knows that “Phase 2” in one project means something completely different from “Phase 2” in another.

AI does not know any of that. It treats each entry as distinct. It makes predictions based on what the data says, not what you meant. And when the output is wrong, the team loses trust in AI rather than questioning the data that produced it.
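Here is a minimal Python sketch of the matching a human does without thinking. The suffix list and the normalization rules are assumptions for illustration. Real client data usually needs fuzzier matching than this, but even this crude version shows why raw strings mislead a model.

```python
import re

# Assumed list of legal-form suffixes to ignore when comparing names (illustrative only).
LEGAL_SUFFIXES = {"corp", "corporation", "inc", "ltd", "llc", "bv"}


def normalize_client_name(name: str) -> str:
    """Turn a client name into a crude matching key: lowercase, no punctuation, no legal suffix."""
    words = re.sub(r"[^\w\s]", "", name.lower()).split()
    # Drop trailing legal-form words such as "Corp" or "Corporation".
    while words and words[-1] in LEGAL_SUFFIXES:
        words.pop()
    return " ".join(words)


records = ["Acme Corp", "ACME Corporation", "acme"]

# Compared as raw strings, these look like three different clients.
print(len(set(records)))  # 3
# After normalization they collapse to one key.
print({normalize_client_name(r) for r in records})  # {'acme'}
```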

This is one reason CRM integrations hit unexpected walls. The integration itself works. The data underneath does not support what you are trying to do with it.

Three data problems that kill AI projects before they start:

- Duplicate and inconsistent records. If your team enters client information differently depending on who is typing, every AI analysis built on that data will be skewed. A customer segmentation model trained on dirty CRM data does not give you bad AI. It gives you an accurate reflection of your bad data practices.

- Missing context. AI cannot infer the “why” behind a data point. If your project tracking shows a task took 40 hours but the scope changed three times mid-project, the AI will learn that similar tasks take 40 hours. That is wrong, and it will propagate that error into every future estimate.

- Siloed systems. Your marketing data lives in one platform. Sales data in another. Finance in a third. AI needs connected data to find patterns. When you force it to work from a single silo, you get single-silo insights, which are usually misleading.
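The missing-context problem is easy to reproduce. In this sketch the task history is made up and the `scope_changes` field is hypothetical: most tools do not record it, which is exactly the point. A naive estimate averages every logged hour. A context-aware one only learns from tasks whose scope stayed stable and sets the rest aside for a human to review.

```python
from statistics import mean

# Made-up history for one task type. "scope_changes" is a hypothetical field
# your project tool would need to capture for the context to exist at all.
history = [
    {"task": "website audit", "hours": 12, "scope_changes": 0},
    {"task": "website audit", "hours": 14, "scope_changes": 0},
    {"task": "website audit", "hours": 40, "scope_changes": 3},
]


def naive_estimate(tasks):
    """What a model learns without context: every logged hour counts the same."""
    return mean(t["hours"] for t in tasks)


def context_aware_estimate(tasks):
    """Learn only from tasks with a stable scope; return None if there are none."""
    stable = [t["hours"] for t in tasks if t["scope_changes"] == 0]
    return mean(stable) if stable else None


def needs_review(tasks):
    """Rescoped tasks are not thrown away; they are flagged for a human to explain."""
    return [t for t in tasks if t["scope_changes"] > 0]
```

With these numbers the naive estimate is 22 hours and the context-aware estimate is 13 hours, while the 40-hour task lands in the review list instead of silently inflating every future quote.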

The Organizational Readiness Gap: Why 42% of Companies Abandoned AI

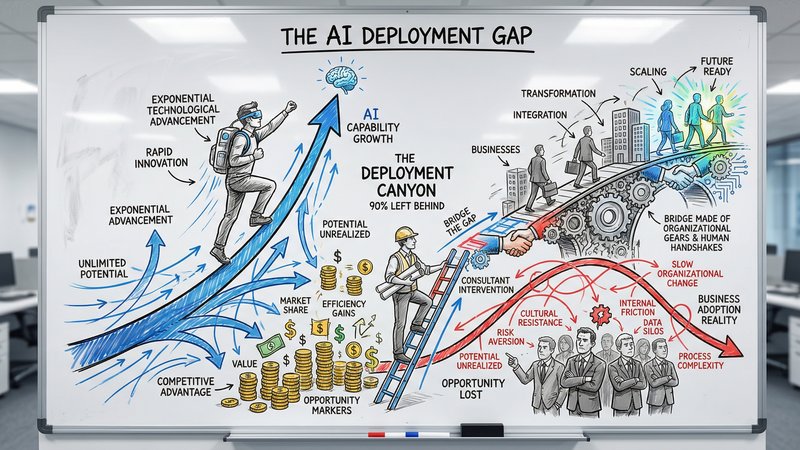

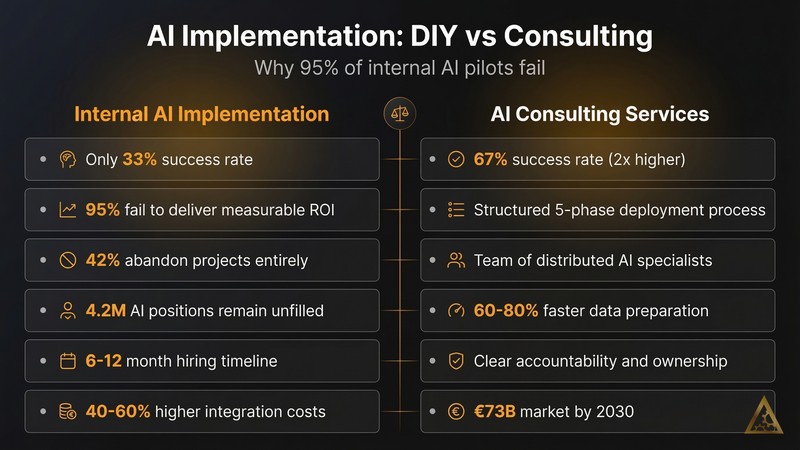

The numbers tell a stark story. In 2024, 17% of companies had abandoned their AI initiatives. By 2025, that figure jumped to 42%. More than two in five companies that tried AI decided to walk away.

MIT research from 2025 found that 95% of generative AI pilots fail to deliver ROI. Not “underperform.” Fail.

Meanwhile, a small group of organizations is capturing most of the value. Only 14% of companies are genuinely prepared for AI, but that 14% captures 70% of AI’s total financial value. Between prepared and unprepared companies there is no gentle slope, only a cliff.

What separates the 14% from the rest? It comes down to organizational readiness, not technical sophistication. Organizations with mature, documented processes achieve 2.5x greater improvement from AI compared to those with ad hoc workflows.

The prepared companies share three traits:

They documented their processes before buying tools. Every step, every decision point, every exception. If a human cannot follow a written process document and get the same result as the person who usually does the work, AI certainly cannot either.

They treated data as infrastructure. Clean data was not a side project. It was a prerequisite. They invested in data governance, standardization, and quality monitoring before they invested in AI models.

They started with the problem, not the technology. Instead of asking “Where can we use AI?”, they asked “What specific bottleneck costs us the most time or money?” Then they evaluated whether AI was the right solution for that bottleneck. Sometimes it was. Sometimes a simple automation or process change solved it without any AI at all.

This is the approach we recommend when designing a pilot with a clear roadmap, rather than experimenting randomly and hoping something sticks.

How to Tell If AI Is Exposing Your Weak Points

Before you spend another euro on AI tools, run this diagnostic on your own business. It takes about four and a half hours, roughly one afternoon, and costs nothing.

Process audit (2 hours). Pick your three most time-consuming workflows. For each one, write down every step from start to finish. Include the unofficial steps, the workarounds, the “ask Dave because he knows how this works” moments. If you cannot document it completely, AI cannot automate it.

Data quality check (1 hour). Open your CRM. Search for your ten largest clients. Count how many have duplicate records, missing fields, or inconsistent formatting. Do the same for your project management tool. If more than 20% of records have issues, your data is not AI-ready.
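If your CRM can export its records, you can script this check instead of counting by hand. A minimal sketch, assuming each record is a dictionary with `name`, `email`, and `country` fields and that your convention is two-letter uppercase country codes. Both are assumptions, so adjust them to your own CRM. The duplicate check here only catches names that match after trimming and lowercasing.

```python
import re

# Assumed required fields and format rule; replace with your own CRM conventions.
REQUIRED_FIELDS = ("name", "email", "country")
COUNTRY_CODE = re.compile(r"^[A-Z]{2}$")  # e.g. "NL", "DE"
AI_READY_THRESHOLD = 0.20  # the 20% rule of thumb


def record_issues(record, seen_names):
    """List the problems with one record; seen_names tracks names already encountered."""
    issues = [f"missing {field}" for field in REQUIRED_FIELDS if not record.get(field)]
    country = record.get("country")
    if country and not COUNTRY_CODE.match(country):
        issues.append("inconsistent country format")
    key = (record.get("name") or "").strip().lower()
    if key and key in seen_names:
        issues.append("possible duplicate")
    seen_names.add(key)
    return issues


def audit(records):
    """Return the share of records with at least one issue and whether it is within the threshold."""
    seen = set()
    flagged = 0
    for record in records:
        if record_issues(record, seen):
            flagged += 1
    rate = flagged / len(records) if records else 0.0
    return rate, rate <= AI_READY_THRESHOLD


clients = [
    {"name": "Acme Corp", "email": "ops@acme.example", "country": "NL"},
    {"name": "acme corp ", "email": "", "country": "nl"},  # duplicate, missing email, bad format
    {"name": "Globex", "email": "hello@globex.example", "country": "DE"},
    {"name": "Initech", "email": "it@initech.example", "country": "Germany"},  # bad format
    {"name": "Umbrella", "email": "info@umbrella.example", "country": "US"},
]

rate, ready = audit(clients)
print(f"{rate:.0%} of records have issues; AI-ready: {ready}")
# 40% of records have issues; AI-ready: False
```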

Decision mapping (1 hour). For each workflow you documented, highlight every point where someone makes a judgment call. “Is this lead qualified?” “Does this project need senior oversight?” “Should we escalate this issue?” Each judgment call needs explicit criteria before AI can help. If the criteria live in someone’s head, they live nowhere useful.
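Writing criteria down can be as simple as turning them into rules anyone can check. A minimal sketch for the “Is this lead qualified?” call. The fields and thresholds below are hypothetical placeholders. The useful part is that every rejection comes with a stated reason instead of a gut feeling.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical thresholds: what matters is that they are written down, not these values.
MIN_EMPLOYEES = 10
MIN_BUDGET_EUR = 5_000


@dataclass
class Lead:
    employees: int
    budget_eur: float
    decision_maker_involved: bool


def qualify(lead: Lead) -> tuple[bool, list[str]]:
    """Apply the written criteria and return the verdict plus the reasons for any rejection."""
    reasons = []
    if lead.employees < MIN_EMPLOYEES:
        reasons.append(f"fewer than {MIN_EMPLOYEES} employees")
    if lead.budget_eur < MIN_BUDGET_EUR:
        reasons.append(f"budget below €{MIN_BUDGET_EUR:,}")
    if not lead.decision_maker_involved:
        reasons.append("no decision maker involved")
    return (not reasons, reasons)


print(qualify(Lead(employees=40, budget_eur=12_000, decision_maker_involved=True)))
# (True, [])
print(qualify(Lead(employees=4, budget_eur=12_000, decision_maker_involved=False)))
# (False, ['fewer than 10 employees', 'no decision maker involved'])
```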

Exception inventory (30 minutes). List every “special case” in your business. The client who always gets custom pricing. The project type that skips the standard review. The report that needs a different format for one specific stakeholder. Each exception is a potential failure point for AI. You do not need to eliminate them. You need to document them.

What you will likely find is that your business runs on a combination of documented processes and institutional knowledge. The documented parts are candidates for AI. The institutional knowledge parts need to be made explicit first.

This mirrors the workflow automation best practices we see working across dozens of implementations. The companies that get results invest time in process clarity before they invest money in tools.

A Practical Roadmap for AI Readiness

Knowing the problem is half of the work. Here is how to fix it, in order.

Month 1: Document and clean.

Map your core workflows in detail. Standardize your data entry practices. Deduplicate your CRM. Create naming conventions that everyone follows. Unglamorous work, but it is the foundation that determines whether your AI investment returns value or waste.

Set a single standard for how clients, projects, and tasks are named and categorized. Write it down. Train your team on it. Enforce it. One month of discipline here saves six months of AI troubleshooting later.
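A naming standard is easiest to enforce when a script can check it. A minimal sketch, assuming a hypothetical convention of an uppercase client code, the year, a three-digit sequence number, and a short description (for example `ACME-2025-001 Website redesign`). Swap in whatever standard your team agrees on.

```python
import re

# Hypothetical convention: CLIENTCODE-YYYY-NNN followed by a short description.
PROJECT_NAME = re.compile(r"[A-Z]{2,6}-\d{4}-\d{3} \S.*")


def check_names(names):
    """Return the names that break the convention so someone can fix them at the source."""
    return [n for n in names if not PROJECT_NAME.fullmatch(n)]


projects = [
    "ACME-2025-001 Website redesign",
    "acme website phase 2",
    "GLBX-2025-014 CRM cleanup",
    "GLBX-25-3 misc",
]
print(check_names(projects))
# ['acme website phase 2', 'GLBX-25-3 misc']
```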

Month 2: Identify and prioritize.

With clean processes and clean data, identify the specific bottlenecks where AI can help. Rank them by two criteria: time saved per week and implementation complexity. Start with the opportunity that saves the most time with the least complexity.
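One way to turn the two criteria into a single ranking is to score each opportunity by hours saved per unit of complexity. That scoring rule, the 1 to 5 complexity scale, and the example opportunities are all assumptions for illustration. A weighted score or a simple two-by-two grid would work too.

```python
from dataclasses import dataclass


@dataclass
class Opportunity:
    name: str
    hours_saved_per_week: float
    complexity: int  # hypothetical scale: 1 (trivial) to 5 (hard)


def prioritize(opportunities):
    """Rank by hours saved per week per point of complexity, best first (an assumed rule)."""
    return sorted(
        opportunities,
        key=lambda o: o.hours_saved_per_week / o.complexity,
        reverse=True,
    )


candidates = [
    Opportunity("Proposal drafting", hours_saved_per_week=10, complexity=5),
    Opportunity("Invoice matching", hours_saved_per_week=6, complexity=2),
    Opportunity("Meeting notes", hours_saved_per_week=4, complexity=1),
]
for o in prioritize(candidates):
    print(o.name)
```

With these numbers, meeting notes (score 4.0) rank first, invoice matching (3.0) second, and proposal drafting (2.0) last, even though proposal drafting saves the most raw hours.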

Calculate the potential return. If a task takes 10 hours per week and AI can reduce it to 2 hours, that is 8 hours recovered. At an average internal cost of, say, €75 per hour, that is €600 per week, or roughly €31,000 per year, from a single workflow improvement.
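The same arithmetic as a small function, so you can rerun it for each workflow on your list. The €75 hourly cost comes from the example above. The 52-week year is an assumption; using your actual working weeks gives a more conservative figure.

```python
def workflow_value(hours_before, hours_after, hourly_cost_eur, weeks_per_year=52):
    """Return (weekly, yearly) value in euros of the hours a workflow change recovers."""
    hours_recovered = hours_before - hours_after
    weekly = hours_recovered * hourly_cost_eur
    return weekly, weekly * weeks_per_year


print(workflow_value(10, 2, 75))                     # (600, 31200)
print(workflow_value(10, 2, 75, weeks_per_year=48))  # (600, 28800)
```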

Month 3: Pilot with guardrails.

Deploy AI on your top-priority workflow with clear success metrics defined before you start. Measure actual time saved, error rates, and team adoption. Set a 90-day evaluation window. If the pilot does not hit your targets, the data will tell you exactly where the breakdown happened, because your processes are now documented and your data is clean.
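Defining success metrics before you start can be as literal as writing the targets down and checking the pilot’s numbers against them at the end of the window. A minimal sketch. The three metrics mirror the ones above, but the target values and the example results are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PilotTargets:
    min_hours_saved_per_week: float
    max_error_rate: float      # share of AI outputs that needed correction
    min_adoption_rate: float   # share of the team actually using the tool


def evaluate_pilot(targets, hours_saved, error_rate, adoption_rate):
    """Compare measured results with targets set before the pilot; return the metrics that missed."""
    misses = []
    if hours_saved < targets.min_hours_saved_per_week:
        misses.append("hours saved")
    if error_rate > targets.max_error_rate:
        misses.append("error rate")
    if adoption_rate < targets.min_adoption_rate:
        misses.append("adoption")
    return misses


# Hypothetical targets, fixed before day one of the 90-day window.
targets = PilotTargets(min_hours_saved_per_week=6, max_error_rate=0.05, min_adoption_rate=0.8)

print(evaluate_pilot(targets, hours_saved=7.5, error_rate=0.08, adoption_rate=0.9))
# ['error rate']
```

An empty list means the pilot met every target. Anything else names the metric that missed, which tells you where in your documented process to start looking.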

The companies that follow this sequence consistently see results. The companies that skip straight to Month 3, which is most of them, consistently do not.

The Real AI Readiness Assessment

Every failed AI project teaches the same lesson. The technology was ready. The organization was not.

If over 80% of AI projects fail and 70% of those failures trace back to data quality issues, then the highest-ROI investment you can make right now is organizational clarity. Clean data. Documented workflows. Explicit decision criteria. That groundwork determines whether AI multiplies your capacity or multiplies your chaos.

The 14% of companies capturing 70% of AI’s value did not get there by picking better models. They got there by being honest about the state of their operations and doing the boring work to fix them first.

You can start that process today. Our AI Readiness Assessment evaluates your data quality, process maturity, and organizational alignment, then gives you a specific action plan based on where you actually stand.

Take the AI Readiness Assessment and find out whether your business is ready to benefit from AI, or whether AI is about to expose every crack in your foundation.

Thom Hordijk

Founder
