Why Security Is the Real AI Adoption Bottleneck

Most AI adoption stalls on security concerns, not cost or talent. Learn how to address data privacy, GDPR, and AI security for business the right way.

The Conversation Nobody Wants to Start

Every week, a business owner tells us the same thing. They want to use AI. They see the potential. They have a list of workflows that are begging for automation.

Then they get to the security question and everything stops.

Where does the data go? Who can see it? What happens if a client finds out their information passed through an AI system? What does GDPR say about this?

These are not irrational fears. For B2B service businesses handling client data, security is a legitimate concern. In our experience, it is the single biggest reason adoption stalls, ahead of budget, talent, or resistance to change.

The problem is that most businesses treat security as a reason to wait. It should be a reason to get specific.

Why Security Concerns Hit Service Businesses Hardest

A SaaS company building internal tools can experiment with AI on its own data. The stakes are contained. If something goes wrong, the damage is internal.

Service businesses do not have that luxury. You handle client data every day. Contracts, financial records, project details, personal information. Every workflow you want to automate touches someone else’s sensitive data.

That creates a specific set of fears:

Client confidentiality. If you feed a client proposal into an AI tool, does that content become part of the model’s training data? Could it surface in another user’s output? For a consulting firm or accounting practice, that possibility is enough to shut down the conversation entirely.

Regulatory exposure. GDPR applies to any business processing the personal data of people in the EU. The fines are real: up to €20 million or 4% of global annual turnover, whichever is higher. When AI tools process personal data, the compliance questions multiply fast.

Reputational risk. Even a small data breach can cause permanent trust damage. A B2B service business lives on referrals and relationships. One security incident with a client’s data can cost more than the technology ever saved.

These concerns are valid. They are also solvable. The businesses that move forward stop asking “is AI safe?” and start asking “how do we make it safe for this specific workflow?”
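To make the GDPR ceiling mentioned above concrete, here is a minimal arithmetic sketch. It assumes the upper fine tier (the greater of €20 million and 4% of annual turnover); the turnover figures are invented for illustration, and the result is a theoretical maximum, not a prediction of what a regulator would impose.

```python
# Upper-tier GDPR ceiling: the greater of a fixed amount and a share of turnover.
FIXED_CAP_EUR = 20_000_000
TURNOVER_SHARE_PERCENT = 4


def max_gdpr_fine(annual_turnover_eur: int) -> float:
    """Return the theoretical upper bound on a fine for a given annual turnover."""
    turnover_based = annual_turnover_eur * TURNOVER_SHARE_PERCENT / 100
    return max(FIXED_CAP_EUR, turnover_based)


# Illustrative turnover figures only.
for turnover in (5_000_000, 2_000_000_000):
    print(f"turnover EUR {turnover:,} -> ceiling EUR {max_gdpr_fine(turnover):,.0f}")
```

For a small firm the fixed €20 million figure is the binding ceiling; the percentage only takes over once turnover exceeds €500 million.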

What Actually Happens to Your Data

Most AI security fears come from not understanding the technical reality. So let us be specific.

Cloud AI services (ChatGPT, Claude, Gemini). Free versions of these tools may use your inputs to train future models. For business use, OpenAI, Anthropic, and Google all offer paid business plans with written agreements that your data will not be used for training.

Private deployment. For businesses with strict data requirements, AI models can run entirely on your own servers. Your data never leaves your control. This costs more to set up, but for regulated industries, it removes the cloud concern entirely.

Workflow automation tools. Platforms like Make, Zapier, and n8n connect your systems and pass information between them. The key question is where data is stored and for how long. Most platforms let you choose European data storage and control how long data is kept. Check these settings before connecting anything that handles personal data.

The pattern is consistent. Consumer tools have weaker data protections. Business and enterprise tools have strong ones. The first step is simply using the right tier.

GDPR and AI: What You Actually Need to Know

GDPR is the regulation European business owners worry about most when it comes to AI. Rightly so. But the requirements are more specific than most people assume.

Here is what matters for a service business using AI tools:

Lawful basis for processing. You need a legal basis to process personal data through AI. For most B2B workflows, the basis you already rely on to deliver the service, typically performance of a contract or legitimate interests, can also cover AI-assisted work such as summarizing meeting notes, generating reports, or drafting communications from project records. Confirm this holds for your situation, and document which basis applies to each workflow.

Data Processing Agreements (DPAs). Any AI tool that processes personal data on your behalf needs a written data processing agreement. Every major AI provider offers one. This is not optional. It is a standard part of business procurement. If a vendor cannot provide one, choose a different vendor.

Data minimization. Only send the data the AI actually needs. If you are using AI to draft a follow-up email, it does not need your client’s full financial history. Strip out unnecessary personal data before sending. This is good practice regardless of regulation. It also reduces your exposure if something goes wrong.

Right to explanation. If AI influences decisions about people (hiring, credit, service eligibility), those people may have a right to understand how the decision was made. For most B2B service work (drafting documents, summarizing data, routing tasks), this does not apply. But if you use AI to score or classify people, check with legal counsel.

Transparency. Your clients should know if AI is involved in processing their data. This does not mean every email needs a disclaimer. It means your privacy policy and service agreements should reflect current practices.

None of this is impossible. Most of it is paperwork and configuration. The businesses that treat GDPR compliance as a checklist rather than a blocker move forward much faster.
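The data minimization point above can be sketched as a simple allowlist applied before anything is sent to an AI tool. This is an illustration under assumptions: the record, its field names, and the `minimize` helper are all hypothetical, and a real workflow would pull records from your own CRM or project system.

```python
# Hypothetical client record; field names and values are illustrative only.
client_record = {
    "contact_name": "Jane Example",
    "contact_email": "jane@example.com",
    "last_meeting_summary": "Discussed Q3 onboarding timeline.",
    "iban": "NL00BANK0123456789",
    "annual_revenue_eur": 1_250_000,
}

# The only fields a follow-up email draft actually needs (an assumption).
FOLLOW_UP_EMAIL_FIELDS = {"contact_name", "last_meeting_summary"}


def minimize(record: dict, allowed_fields: set) -> dict:
    """Keep only allowlisted fields; everything else stays in your own system."""
    return {key: value for key, value in record.items() if key in allowed_fields}


payload = minimize(client_record, FOLLOW_UP_EMAIL_FIELDS)
print(payload)
```

An allowlist is used rather than a blocklist so that a new sensitive field added to the record later is excluded by default.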

The Real Risk: Doing Nothing

Here is what does not show up in the security analysis. The cost of not adopting AI is also a security risk.

Your competitors who automate client onboarding have fewer manual handoffs. Fewer handoffs mean fewer chances for human error. Fewer misrouted emails, fewer files saved to the wrong folder, fewer spreadsheets shared with the wrong person.

Manual processes are not inherently secure. They are familiar. There is a difference.

A business that routes every client document through a structured, automated workflow with access controls and tracking is more secure than one that depends on people remembering to follow a process. Automation enforces data handling rules that manual processes cannot.

The businesses closing the adoption gap are not just faster. They are often more secure, because automation forces consistency that manual work never achieves.

A Practical AI Security Checklist

If you want to move forward with AI without creating risk, here is the framework we use with every client. It covers the decisions that actually matter.

1. Classify your data

Before touching any AI tool, categorize the data in your target workflow:

- Public. Marketing content, published reports, general business information. Low risk. Use any reputable AI tool.

- Internal. Project plans, internal communications, operational data. Medium risk. Use business-tier AI tools with DPAs.

- Confidential. Client data, financial records, personal information. High risk. Use enterprise AI tools, private deployment, or anonymize before processing.

Most workflows involve a mix. The classification tells you which parts need extra protection and which can move faster.
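One way to record this classification is a small inventory that labels each data item in a workflow. The sketch below is illustrative only: the `DataClass` levels mirror the three categories above, while the workflow items and helper names are hypothetical.

```python
from enum import IntEnum


class DataClass(IntEnum):
    PUBLIC = 1        # marketing content, published reports
    INTERNAL = 2      # project plans, internal communications
    CONFIDENTIAL = 3  # client data, financial records, personal information


# Hypothetical inventory for a client-onboarding workflow.
workflow_items = {
    "service_brochure": DataClass.PUBLIC,
    "project_plan": DataClass.INTERNAL,
    "client_contract": DataClass.CONFIDENTIAL,
    "kickoff_agenda": DataClass.INTERNAL,
}


def overall_class(items: dict) -> DataClass:
    """A workflow is as sensitive as its most sensitive item."""
    return max(items.values())


def needs_extra_protection(items: dict) -> list:
    """List the items that must not go through a standard cloud tool as-is."""
    return sorted(name for name, level in items.items() if level == DataClass.CONFIDENTIAL)


print(overall_class(workflow_items).name)
print(needs_extra_protection(workflow_items))
```

Treating the workflow as the maximum of its parts is a conservative default; the per-item list shows which parts could be split off and handled separately.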

2. Choose the right deployment model

Match the data classification to the right approach:

- Public/Internal data: Cloud AI services (business tier) with European data storage

- Confidential data: Enterprise plans with data processing agreements, or private deployment

- Mixed workflows: Process the sensitive parts locally, use cloud AI for the rest
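The matching above can be written down as a lookup table, so that each step of a mixed workflow gets its own deployment decision. This is a sketch, not a prescription: the step names are hypothetical, and the option strings simply restate the list above.

```python
# Deployment option per data class, restating the list above.
DEPLOYMENT_BY_CLASS = {
    "public": "cloud AI service (business tier, European data storage)",
    "internal": "cloud AI service (business tier, European data storage)",
    "confidential": "enterprise plan with a DPA, or private deployment",
}


def plan_workflow(steps: dict) -> dict:
    """Map each workflow step to a deployment option based on its data class."""
    return {step: DEPLOYMENT_BY_CLASS[data_class] for step, data_class in steps.items()}


# Hypothetical mixed workflow: one step is internal, one touches client financials.
steps = {
    "draft_meeting_summary": "internal",
    "extract_invoice_totals": "confidential",
}

plan = plan_workflow(steps)
for step, option in plan.items():
    print(f"{step}: {option}")
```

An unknown data class raises a `KeyError` rather than falling back to a default, which forces an explicit classification before a step is automated.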

3. Anonymize where possible

Often, you do not need to send real client names or personal details to an AI tool. Drafting a proposal template? Use placeholder names. Summarizing financial data? Aggregate before sending. Removing identifying details is the simplest and most effective security step available.
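As a minimal sketch of the placeholder approach, the snippet below swaps known names for tokens before text is sent out and restores them afterwards. Strictly speaking this is pseudonymization rather than full anonymization, because the mapping lets you reverse it, so keep the mapping on your own systems. The function names and sample text are hypothetical, and the approach only catches names you list explicitly.

```python
def pseudonymize(text: str, names: list) -> tuple:
    """Replace each listed name with a placeholder; return the text and the mapping."""
    mapping = {}
    for index, name in enumerate(names, start=1):
        placeholder = f"[CLIENT_{index}]"
        text = text.replace(name, placeholder)
        mapping[placeholder] = name
    return text, mapping


def restore(text: str, mapping: dict) -> str:
    """Put the real names back into text returned by the AI tool."""
    for placeholder, name in mapping.items():
        text = text.replace(placeholder, name)
    return text


# Illustrative input only.
draft = "Proposal for Acme Consulting B.V., attention Jane Example."
safe_text, mapping = pseudonymize(draft, ["Acme Consulting B.V.", "Jane Example"])

print(safe_text)                    # this is what would be sent to the AI tool
print(restore(safe_text, mapping))  # this is what you see after restoring
```

Free-text fields can still contain identifying details that a fixed name list misses, so a manual review step remains sensible for confidential material.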

4. Audit your vendor stack

For each AI tool in your workflow, confirm:

- Where is data processed and stored?

- Is a Data Processing Agreement in place?

- Is European data storage available?

- What is the data retention policy?

- Is data used for model training? (It should not be, on business plans.)
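The audit questions above can be captured as a small record per vendor, with a function that lists what is still unresolved. This is a sketch under assumptions: the `VendorAudit` fields, the example vendor, and the 30-day retention threshold are all hypothetical and should be replaced with your own policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VendorAudit:
    name: str
    processing_location: str        # where data is processed and stored ("" if unknown)
    dpa_signed: bool                # is a Data Processing Agreement in place?
    eu_storage_available: bool      # is European data storage available?
    retention_days: Optional[int]   # None means the retention policy is unknown
    used_for_training: bool         # is data used for model training?


def open_issues(audit: VendorAudit, max_retention_days: int = 30) -> list:
    """Return the checklist items this vendor does not yet satisfy."""
    issues = []
    if not audit.processing_location:
        issues.append("processing location unknown")
    if not audit.dpa_signed:
        issues.append("no Data Processing Agreement in place")
    if not audit.eu_storage_available:
        issues.append("no European data storage option")
    if audit.retention_days is None:
        issues.append("retention policy unknown")
    elif audit.retention_days > max_retention_days:
        issues.append(f"retention exceeds {max_retention_days} days")
    if audit.used_for_training:
        issues.append("data is used for model training")
    return issues


# Hypothetical vendor entry.
vendor = VendorAudit("ExampleAI", "EU (Frankfurt)", True, True, None, False)
print(open_issues(vendor))
```

An empty list means the vendor passes every question on the checklist; anything else is a concrete item to raise with the vendor before connecting client data.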

5. Document everything

Write down your AI data handling policy. It does not need to be long. A one-page document that covers which tools you use, what data they process, and what protections are in place will satisfy most client inquiries and regulatory checks.

Why We Built This Way

At Acrosolve, we tested AI tools on our own business before offering them to clients. We spent over a year running AI systems internally. We learned what worked, what broke, and what was pure hype. We know the difference between a vendor demo and a production system because we refused to sell things that did not deliver.

That experience shapes how we approach security. When we recommend an AI tool or build an automation, we have already tested its data handling. We know which platforms honor their agreements, which ones offer real European data storage, and which ones have fine print that should concern you.

We also tell you when a tool is the wrong fit. If a client’s data sensitivity requires private deployment and the budget only supports a cloud solution, we say so. Recommending something we know will create risk is a bad way to build a long-term partnership. Understanding what blocks adoption starts with being honest about these trade-offs.

Start With One Low-Risk Workflow

You do not need to solve every security question before starting. You need to solve the security questions for one specific workflow.

Pick a process where the data is mostly internal or public. Meeting summaries. Content drafting. Internal reporting. These workflows carry low data risk and high time savings. They let you build confidence with AI and develop your security policies before moving to anything sensitive.

Once you have a working system with documented security controls, expanding to higher-risk workflows becomes a structured decision, not an open-ended debate.

This is the same pilot-first approach that works for AI adoption in general. Start small, prove the value, then scale with confidence. The security layer just adds one more dimension to evaluate.

Security Is the Path Forward

The businesses that adopt AI successfully get specific about security. They classify their data, choose the right tools, document their approach, and start with low-risk workflows that build momentum.

Every month you spend debating AI security in the abstract is a month your competitors spend building secure, automated workflows that make them faster and more reliable.

The tools are ready. The compliance frameworks exist. The question is whether you are willing to move from general worry to specific action.

Ready to figure out which of your workflows can be safely automated? Schedule your free AI Readiness Assessment and we will map your processes against the right security framework for your business.

Thom Hordijk

Founder

Get posts like this in your inbox every week

Weekly insights on AI and automation for B2B service businesses. No hype, just what works.

Related Articles

View all articles

Is AI Finally Built for Small Businesses?

AI used to demand enterprise budgets and IT teams. That's changed. Here's what's different now and how to tell if it's right for your business.

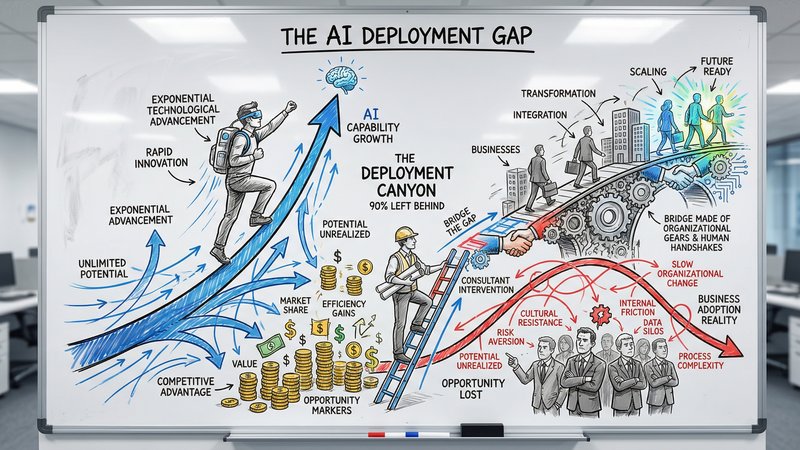

90% of Businesses Don't Use AI. That's the Opportunity.

90% of US businesses still don't use AI in production. The gap between capability and deployment is where the real opportunity lives for service businesses.

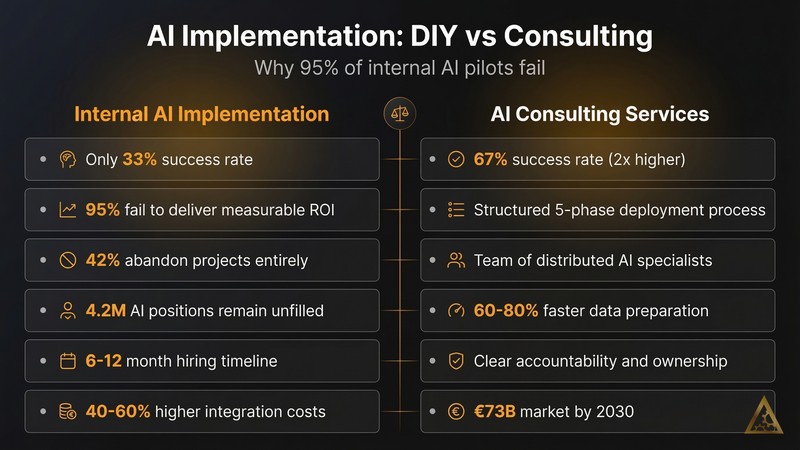

Why AI Deployment Needs Consulting Services

49% of businesses piloted AI tools in 2024. Only 4% deployed them at scale. The gap between buying AI and getting value from it is a services problem, not a technology one.